How to Handle Corrupted or Malformed PDFs in Go Pipelines

PDF corruption is a production problem, not a desktop inconvenience. When your Go service processes documents at scale (statements, contracts, forms, invoices), some of those files will be malformed. They arrive broken from legacy systems, third-party exporters, incomplete uploads, or scanner firmware that only partially implements the PDF spec. Your pipeline needs to handle them reliably without crashing or silently producing bad output.

This guide covers how to detect, normalize, and recover from corrupted or malformed PDFs programmatically in Go, using UniPDF and Ghostscript as your primary tools.

Note on code examples: The snippets in this article are illustrative starting points. Before deploying to production, add appropriate logging, input validation, timeout handling, and security review for your specific environment. Full runnable examples are available in the UniDoc GitHub examples repository.

Why PDF Corruption Happens in Production Pipelines

In any document workflow processing PDFs at volume, malformed files are routine, not exceptional. They typically come from:

- Incomplete uploads or network interruptions during file transfer

- Legacy enterprise systems exporting PDFs that only partially conform to the spec

- Third-party generators that interpret the PDF specification differently from Adobe Acrobat

- Scanner firmware with incomplete spec implementations

- Files truncated or partially overwritten in storage

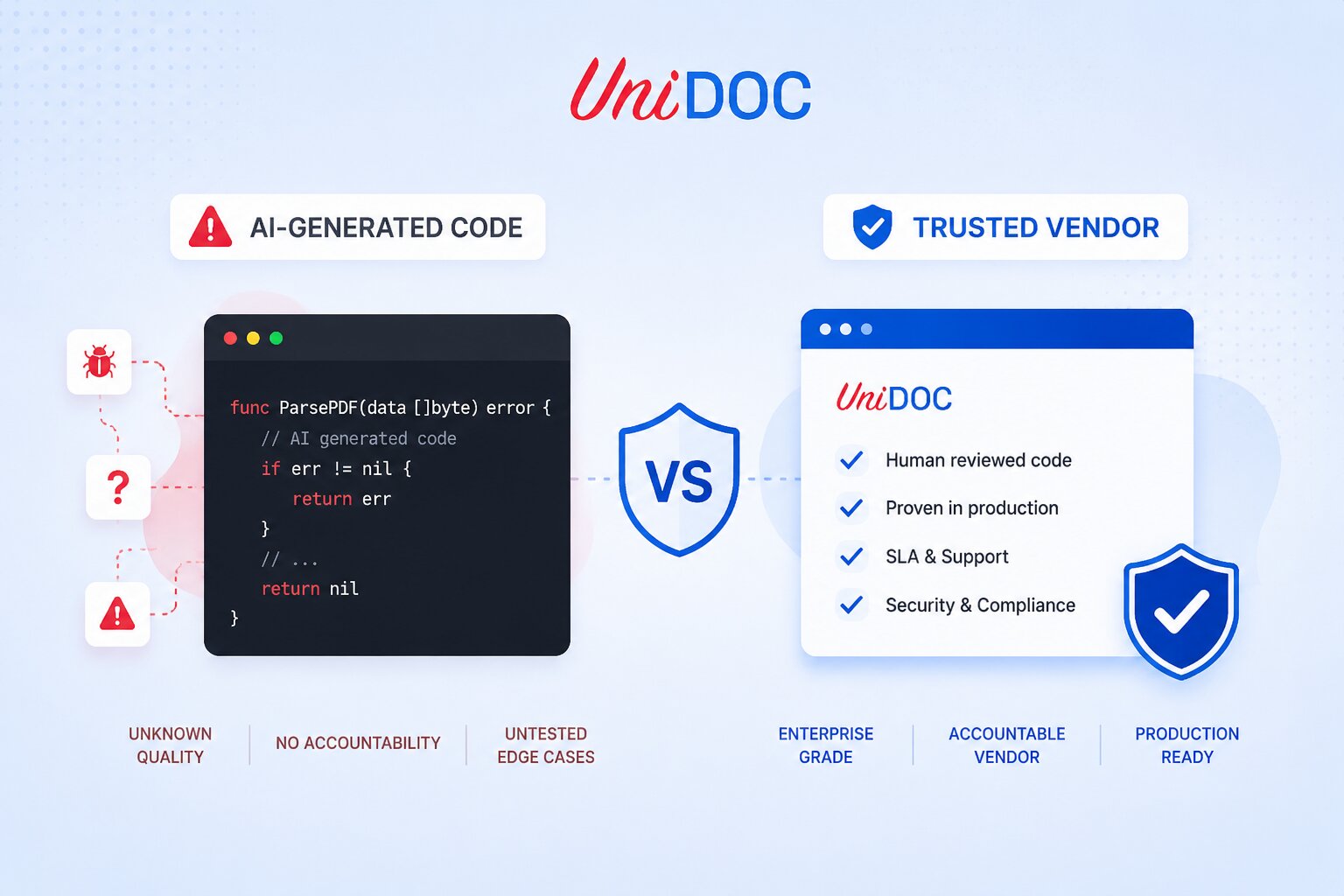

A library that has only seen well-formed PDFs will fail on these. A library with years of production exposure across enterprise document workflows has built handling for most of these variations. That accumulated edge case coverage is what separates a production-grade library from one assembled last week.

A Note on Manual Recovery

For a one-off file that needs a quick fix outside of a pipeline, tools like Adobe Acrobat (File → Save As triggers a repair pass) or cloud backup version history (Google Drive, Dropbox) can recover minor corruption without engineering effort. These are reasonable starting points for a single broken file.

For automated pipelines processing PDFs at scale, you need a programmatic approach. The rest of this article covers that.

Step 1: Detect Corruption Early at Ingestion

The best time to catch a bad file is at ingestion, before it enters your workflow and causes a failure downstream. UniPDF returns a clear error when it encounters a file it cannot parse, which gives your pipeline a decision point: reject, quarantine, normalize, or route to manual review.

Licensing note: UniPDF requires a license key to operate. You can get a free metered API key at cloud.unidoc.io. For regulated or air-gapped environments, an offline key is also available at unidoc.io/free-trial with zero network calls and local validation only.

    package main

    import (
        "fmt"
        "os"

        "github.com/unidoc/unipdf/v4/common/license"
        "github.com/unidoc/unipdf/v4/model"
    )

    func init() {
        // Fail fast at startup if the license key is missing or invalid.
        err := license.SetMeteredKey(os.Getenv("UNIDOC_LICENSE_API_KEY"))
        if err != nil {
            panic(err)
        }
    }

    // loadPDF parses the file at path and returns a reader only if the
    // document is unencrypted and reports at least one page.
    func loadPDF(path string) (*model.PdfReader, error) {
        f, err := os.Open(path)
        if err != nil {
            return nil, fmt.Errorf("could not open file: %w", err)
        }
        defer f.Close()

        r, err := model.NewPdfReader(f)
        if err != nil {
            // Parsing failed: file likely needs normalization before processing
            return nil, fmt.Errorf("PDF parsing failed (possible corruption): %w", err)
        }

        isEncrypted, err := r.IsEncrypted()
        if err != nil {
            return nil, fmt.Errorf("could not check encryption status: %w", err)
        }
        if isEncrypted {
            return nil, fmt.Errorf("PDF is encrypted; decrypt before processing")
        }

        numPages, err := r.GetNumPages()
        if err != nil {
            // Keep the underlying error instead of discarding it.
            return nil, fmt.Errorf("could not read page count: %w", err)
        }
        if numPages == 0 {
            return nil, fmt.Errorf("PDF has no readable pages; may be deeply corrupted")
        }

        return r, nil
    }

When NewPdfReader fails, that is your signal: the file needs normalization before it can be processed further. A zero page count after successful parsing is a secondary signal — structurally readable but effectively empty, and often a sign of deeper damage.
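Before handing a file to the parser, a cheap byte-level check can separate obviously truncated or non-PDF uploads from files that need a full parse. The sketch below is a minimal illustration using only the standard library and is not part of UniPDF: quickCheck, errNoHeader, and errNoTrailer are hypothetical names, and the 1024-byte search window is an assumption chosen to tolerate a little leading or trailing junk. Passing this check does not mean the file is valid; it only rules out two common failure shapes.

```go
package main

import (
	"bytes"
	"errors"
	"fmt"
	"os"
)

// Hypothetical sentinel errors for this sketch; they are not UniPDF errors.
var (
	errNoHeader  = errors.New("missing %PDF- header")
	errNoTrailer = errors.New("missing %%EOF marker")
)

// window is an assumed search range at each end of the file.
const window = 1024

// quickCheck looks for the "%PDF-" header near the start of data and the
// "%%EOF" marker near the end. It does not validate the PDF structure.
func quickCheck(data []byte) error {
	head := data
	if len(head) > window {
		head = head[:window]
	}
	if !bytes.Contains(head, []byte("%PDF-")) {
		return errNoHeader
	}

	tail := data
	if len(tail) > window {
		tail = tail[len(tail)-window:]
	}
	if !bytes.Contains(tail, []byte("%%EOF")) {
		return errNoTrailer
	}
	return nil
}

// quickCheckFile applies quickCheck to a file on disk. Reading the whole
// file keeps the sketch short; a real pipeline might read only both ends.
func quickCheckFile(path string) error {
	data, err := os.ReadFile(path)
	if err != nil {
		return fmt.Errorf("could not read %s: %w", path, err)
	}
	return quickCheck(data)
}
```

A missing %%EOF marker usually points to a truncated transfer, which is worth recording separately from a parse failure. Normalization may still recover such a file, so treat the result as a routing hint rather than a verdict.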

Step 2: Normalize Malformed PDFs with Ghostscript

Ghostscript is the most reliable first-pass tool for structurally damaged PDFs. It rewrites the file into a clean, spec-compliant output, repairing broken cross-reference tables, unclosed object streams, and structural problems that PDF readers cannot recover from on their own.

    gs -o normalized.pdf \
      -sDEVICE=pdfwrite \
      -dSAFER \
      -dNOPAUSE \
      -dBATCH \
      corrupted.pdf

After normalization, retry loading normalized.pdf with UniPDF. Many files that failed to parse before will load cleanly after this step, though not every damaged file can be recovered this way.

You can integrate Ghostscript normalization directly into your Go pipeline by shelling out when UniPDF returns a parse error:

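    import (
        "fmt"
        "os/exec"
    )

    // normalizeWithGhostscript rewrites input into a fresh PDF at output.
    // It requires the gs binary to be available on PATH.
    func normalizeWithGhostscript(input, output string) error {
        cmd := exec.Command("gs",
            "-o", output,
            "-sDEVICE=pdfwrite",
            "-dSAFER",
            "-dNOPAUSE",
            "-dBATCH",
            input,
        )
        // Capture Ghostscript's output so a failure can be diagnosed from the error.
        if out, err := cmd.CombinedOutput(); err != nil {
            return fmt.Errorf("ghostscript normalization failed: %w: %s", err, out)
        }
        return nil
    }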

When to use this: Ghostscript normalization is the recommended first step for files with deep structural problems such as broken xref tables, malformed object streams, or files that fail to open in any viewer.
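A badly damaged file can make an external process run far longer than expected, so it is worth bounding each Ghostscript call. The following is a minimal sketch of one way to do that with exec.CommandContext; the function names, the gsBinary parameter, and the choice of timeout are assumptions for illustration, and the argument list simply mirrors the command shown earlier.

```go
package main

import (
	"context"
	"errors"
	"fmt"
	"os/exec"
	"time"
)

// gsArgs builds the Ghostscript argument list used in this article.
func gsArgs(input, output string) []string {
	return []string{
		"-o", output,
		"-sDEVICE=pdfwrite",
		"-dSAFER",
		"-dNOPAUSE",
		"-dBATCH",
		input,
	}
}

// normalizeWithTimeout runs Ghostscript and kills the process if it runs
// longer than timeout. gsBinary is a parameter so the binary path can be
// configured per environment (an assumption of this sketch).
func normalizeWithTimeout(ctx context.Context, gsBinary, input, output string, timeout time.Duration) error {
	ctx, cancel := context.WithTimeout(ctx, timeout)
	defer cancel()

	cmd := exec.CommandContext(ctx, gsBinary, gsArgs(input, output)...)
	out, err := cmd.CombinedOutput()
	if err != nil {
		// Distinguish a timeout from an ordinary Ghostscript failure.
		if errors.Is(ctx.Err(), context.DeadlineExceeded) {
			return fmt.Errorf("ghostscript timed out after %s: %w", timeout, ctx.Err())
		}
		return fmt.Errorf("ghostscript failed: %w: %s", err, out)
	}
	return nil
}
```

A call such as normalizeWithTimeout(ctx, "gs", in, out, 30*time.Second) would then fail with a timeout error instead of blocking a worker indefinitely. The right limit depends on your file sizes and hardware, so measure before settling on a value.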

As of UniPDF v4.9.0, ReaderOpts has a new option called ParserAutoRepair. Setting it to true lets UniPDF attempt repairs during parsing (broken xref tables, missing referenced objects, and malformed object streams) without requiring a Ghostscript dependency.

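    // Fragment: assumes the fmt, os, and model imports shown earlier.
    pdf, err := os.Open(os.Args[1])
    if err != nil {
        fmt.Fprintln(os.Stderr, "Error opening PDF file:", err)
        os.Exit(1)
    }
    defer pdf.Close()

    // Enable auto-repair so the parser attempts to recover from structural damage.
    pdfReader, err := model.NewPdfReaderWithOpts(pdf, &model.ReaderOpts{
        ParserAutoRepair: true,
    })
    if err != nil {
        fmt.Fprintln(os.Stderr, "Error creating PDF reader:", err)
        os.Exit(1)
    }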

Step 3: Rewrite and Re-Save with UniPDF

For files with minor structural issues — unclosed tags, minor spec violations, or improperly structured metadata — UniPDF can often recover them by reading the file and writing it back out. This regenerates the internal PDF structure cleanly without Ghostscript.

    package main

    import (
        "fmt"
        "os"

        "github.com/unidoc/unipdf/v4/common/license"
        "github.com/unidoc/unipdf/v4/model"
    )

    func init() {
        err := license.SetMeteredKey(os.Getenv("UNIDOC_LICENSE_API_KEY"))
        if err != nil {
            panic(err)
        }
    }

    // repairPDF reads inputPath and writes it back out to outputPath,
    // letting UniPDF regenerate the file structure.
    func repairPDF(inputPath, outputPath string) error {
        f, err := os.Open(inputPath)
        if err != nil {
            return fmt.Errorf("open failed: %w", err)
        }
        defer f.Close()

        r, err := model.NewPdfReader(f)
        if err != nil {
            // If parsing fails here, run Ghostscript normalization first (Step 2)
            return fmt.Errorf("parsing failed; normalize with Ghostscript first: %w", err)
        }

        isEncrypted, err := r.IsEncrypted()
        if err != nil {
            return fmt.Errorf("could not check encryption status: %w", err)
        }
        if isEncrypted {
            return fmt.Errorf("encrypted PDF cannot be repaired without decryption")
        }

        // Copy the parsed document into a writer, then serialize it to disk.
        w, err := r.ToWriter(nil)
        if err != nil {
            return fmt.Errorf("writer creation failed: %w", err)
        }
        if err := w.WriteToFile(outputPath); err != nil {
            return fmt.Errorf("write failed: %w", err)
        }

        fmt.Println("Repaired PDF saved to:", outputPath)
        return nil
    }

Important: UniPDF cannot guarantee a fix for every file. If NewPdfReader returns a parse error, run Ghostscript normalization first (Step 2), then retry with UniPDF.

Step 4: Build a Resilient Recovery Chain

For a production pipeline, chain these steps together with structured error handling so your service degrades gracefully — routing unrecoverable files to a review queue rather than failing hard or silently producing corrupted output:

    // processPDF tries a direct load, then Ghostscript normalization, and
    // escalates if both fail. handlePDF stands in for your own processing step.
    func processPDF(inputPath string) error {
        // Attempt 1: load directly
        _, err := loadPDF(inputPath)
        if err == nil {
            return handlePDF(inputPath)
        }
        fmt.Printf("Direct load failed (%v), attempting Ghostscript normalization\n", err)

        normalized := inputPath + ".normalized.pdf"
        if gsErr := normalizeWithGhostscript(inputPath, normalized); gsErr != nil {
            // normalizeWithGhostscript already describes the failure; add the path.
            return fmt.Errorf("could not normalize %s: %w", inputPath, gsErr)
        }

        // Attempt 2: load after normalization
        _, err = loadPDF(normalized)
        if err == nil {
            return handlePDF(normalized)
        }

        // Attempt 3: route to manual review or trigger regeneration from source
        return fmt.Errorf("PDF unrecoverable, route to manual review or regenerate from source: %w", err)
    }

This try → normalize → retry → escalate pattern keeps your pipeline running without silent failures or unhandled panics.
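For the escalation step to be actionable, the caller needs a reliable way to tell "route this to review" apart from other errors. One option, sketched below under stated assumptions, is a sentinel error that callers test with errors.Is. The names ErrNeedsReview, recoverPDF, and needsReview are hypothetical, and the load and normalize steps are passed in as functions so the control flow can be exercised without UniPDF or Ghostscript installed.

```go
package main

import (
	"errors"
	"fmt"
)

// ErrNeedsReview marks files that every automated attempt failed on.
// Hypothetical name for this sketch.
var ErrNeedsReview = errors.New("pdf needs manual review")

// recoverPDF tries load, then normalize followed by load. On success it
// returns the path that loaded; otherwise it returns an error wrapping
// ErrNeedsReview.
func recoverPDF(
	path string,
	load func(path string) error,
	normalize func(in, out string) error,
) (string, error) {
	firstErr := load(path)
	if firstErr == nil {
		return path, nil
	}

	normalized := path + ".normalized.pdf"
	if err := normalize(path, normalized); err != nil {
		return "", fmt.Errorf("%w: normalize failed: %v (initial error: %v)",
			ErrNeedsReview, err, firstErr)
	}

	if err := load(normalized); err != nil {
		return "", fmt.Errorf("%w: still unreadable after normalization: %v",
			ErrNeedsReview, err)
	}
	return normalized, nil
}

// needsReview reports whether err should send the file to a review queue.
func needsReview(err error) bool {
	return errors.Is(err, ErrNeedsReview)
}
```

With this shape, a worker can call recoverPDF, process the returned path on success, and enqueue the original file for review when needsReview(err) is true, while still treating unrelated errors (for example, a failed queue write) as ordinary failures.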

Step 5: Regenerate from Source When Recovery Is Not Possible

If a PDF cannot be recovered programmatically, the most reliable path is regeneration. If your pipeline has access to the original source data, such as a database record, a structured export, or a template, use UniPDF to generate a fresh, spec-compliant PDF rather than trying to repair badly damaged content.

UniPDF supports full PDF generation in Go, including reports and statements, digital signatures, form creation and flattening, and structured documents with tables and accessibility tagging. Building a generation fallback means your pipeline has a clean exit path for files that cannot be repaired, rather than a dead end.

This is especially relevant for document workflows tied to revenue or compliance — the use cases where a broken PDF cannot simply be dropped.

Preventing Corruption at Ingestion: Pipeline Hardening

The most effective repair strategy is catching bad files before they propagate through your workflow.

Validate at ingestion. Run NewPdfReader and GetNumPages at the point of upload or receipt. Reject or quarantine files that fail immediately, rather than discovering the problem after downstream processing has already run.

Log parse errors with context. Include source system, file size, and error message. Patterns in your logs will identify which upstream systems are consistently sending malformed files — often a legacy exporter or a third-party integration that needs correcting at the source.
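One way to keep that context attached to the error is a small error type that also knows how to log itself. The sketch below uses the standard library's log/slog package (Go 1.21 or later); ParseFailure, its field names, and logParseFailure are hypothetical and shown only to illustrate the idea.

```go
package main

import (
	"fmt"
	"log/slog"
)

// ParseFailure carries ingestion context alongside the underlying parse
// error. Hypothetical type for this sketch.
type ParseFailure struct {
	SourceSystem string
	Path         string
	SizeBytes    int64
	Err          error
}

// Error implements the error interface.
func (p *ParseFailure) Error() string {
	return fmt.Sprintf("parse failed for %s from %s (%d bytes): %v",
		p.Path, p.SourceSystem, p.SizeBytes, p.Err)
}

// Unwrap exposes the underlying error to errors.Is and errors.As.
func (p *ParseFailure) Unwrap() error { return p.Err }

// LogValue implements slog.LogValuer so the failure is logged as
// structured fields rather than one opaque string.
func (p *ParseFailure) LogValue() slog.Value {
	return slog.GroupValue(
		slog.String("source_system", p.SourceSystem),
		slog.String("path", p.Path),
		slog.Int64("size_bytes", p.SizeBytes),
		slog.String("error", fmt.Sprint(p.Err)),
	)
}

// logParseFailure writes one structured record per failed file.
func logParseFailure(l *slog.Logger, pf *ParseFailure) {
	l.Error("pdf parse failed", "failure", pf)
}
```

Because the fields are structured, a log query can group failures by source_system, which is what surfaces a misbehaving upstream exporter.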

Use Ghostscript as a pre-processing step for known-bad sources. If a specific upstream system reliably produces marginally non-compliant PDFs, normalize everything from that source before it enters your main pipeline rather than handling failures reactively.

Treat zero-page PDFs as corrupt. A file that parses without error but reports zero pages should be treated the same as a parse failure. Route it to normalization or manual review.
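The hardening rules above can be collapsed into a single routing decision at ingestion. The following sketch shows one possible encoding; the Action values, the ingestResult fields, and the knownBad source list are all hypothetical names chosen for illustration, and the policy of sending a file to review after one failed normalization is an assumption you may want to adjust.

```go
package main

// Action is what the ingestion stage does with a file.
type Action int

const (
	Accept    Action = iota // pass the file to the main pipeline
	Normalize               // run normalization, then validate again
	Review                  // quarantine for manual review or regeneration
)

// ingestResult summarizes what validation observed for one file.
type ingestResult struct {
	ParseFailed bool   // the parser returned an error
	NumPages    int    // page count reported after parsing
	Source      string // upstream system that sent the file
	Normalized  bool   // the file has already been normalized once
}

// decide applies the rules: parse failures and zero-page files are treated
// alike, known-bad sources are normalized up front, and anything that
// still fails after normalization goes to review.
func decide(r ingestResult, knownBad map[string]bool) Action {
	failed := r.ParseFailed || r.NumPages == 0
	switch {
	case failed && r.Normalized:
		return Review
	case failed:
		return Normalize
	case knownBad[r.Source] && !r.Normalized:
		return Normalize
	default:
		return Accept
	}
}
```

Keeping the decision in a pure function like this makes the policy easy to unit test and to change without touching the parsing code.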

Further Reading

If you are building broader document workflows in Go beyond corruption handling, these resources cover related capabilities in UniPDF:

- PDF Digital Signatures in Go — how to sign and validate PDFs programmatically

- How to Protect PDF Files with Go — password protection and permission control for secure document distribution

- PDF Redaction with Go — how to permanently remove sensitive content before documents leave your system

- UniPDF API Reference — full method signatures used in this article

- UniPDF Getting Started Guide — setting up UniPDF in your project for the first time

- UniPDF GitHub examples repository — runnable Go code across all major use cases

Conclusion

Corrupted PDFs are an operational reality in any document pipeline processing files at scale. The right approach is not manual file repair. It is building a pipeline that detects, normalizes, and recovers from bad files automatically, and escalates cleanly when recovery is not possible.

UniPDF gives Go teams a production-grade library for reading, rewriting, and generating PDFs with no JVM, no native binary, and no external sidecar. It deploys as part of your Go binary and runs wherever your application does. Starting with UniPDF v4.9.0, you can also repair corrupted PDFs at parse time, much as Ghostscript normalization does, by enabling the ParserAutoRepair option in ReaderOpts.

For teams in regulated industries such as financial services, healthcare, legal, and government-adjacent workflows, where document failures have compliance or operational consequences, reliable PDF handling is not a convenience feature. It is a pipeline requirement.

Start building with UniPDF — request a free trial or explore the docs.